Videos and Hands-On:

Introduction to Computing Technologies

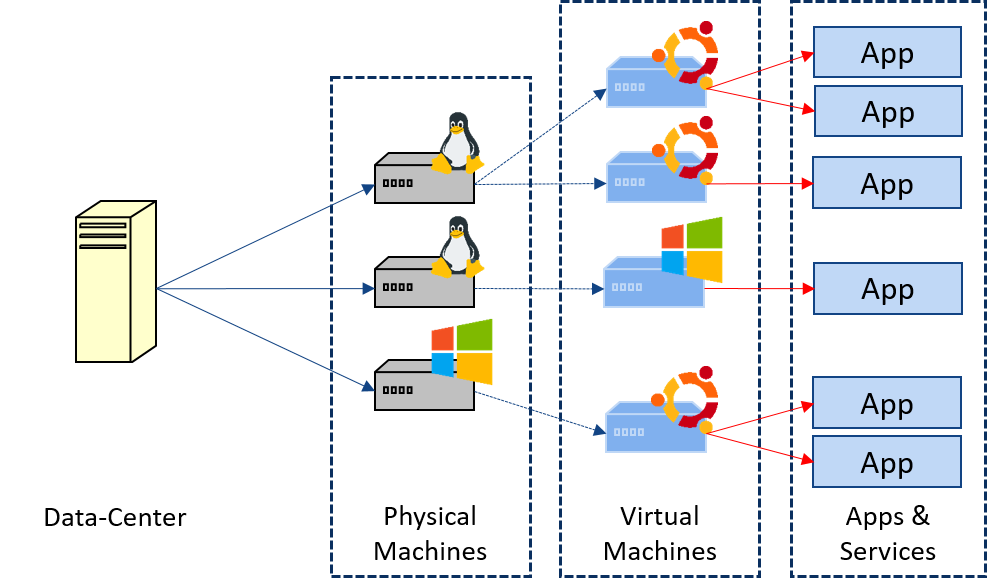

The first part of the course focuses on Data-Centers and High Performance. Understanding concepts like "performance" and "resources" is fundamental when running any experiment or application on a computing system. Experiments and applications run faster and more efficiently when the proper resources are provided, and most of them can be parallelized to complete as many experiments as possible in the minimum amount of time when resources are available. In this part we'll see the fundamentals of resources and performance, as well as practical concepts on parallelism, the Cloud and virtualization. With this knowledge we expect researchers and professionals to be able to plan their experiments, executions and environments, optimizing their time and their computing resources.

Overview of ML techniques

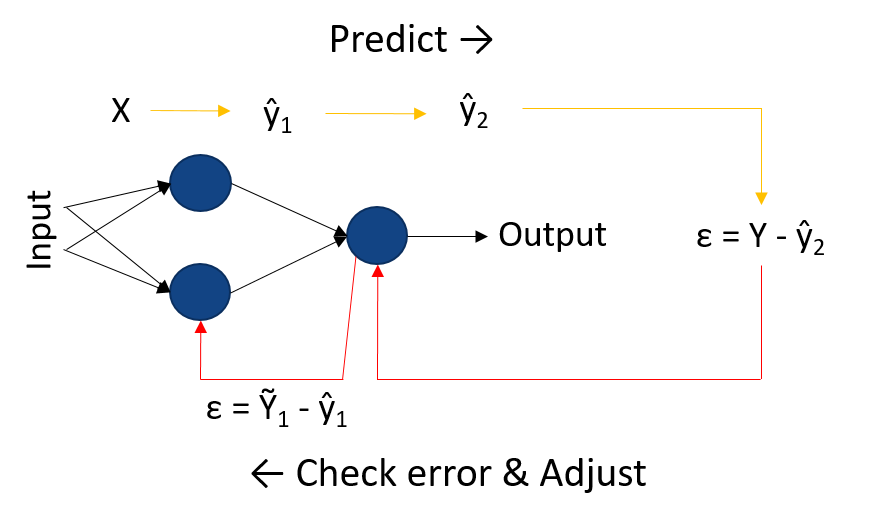

The second part of the course focuses on Machine Learning and Data Science, with a quick overview of what AI and ML are, the different techniques and how they work, and some examples of algorithms. In this part we'll see supervised learning techniques (modeling and prediction), unsupervised learning techniques (clustering, reinforcement learning and streams), and the fundamentals of neural networks. Knowing the different techniques will help to understand the requirements of those algorithms when running experiments in a data-center or computing system.

Distributed computing using Spark and BigDL

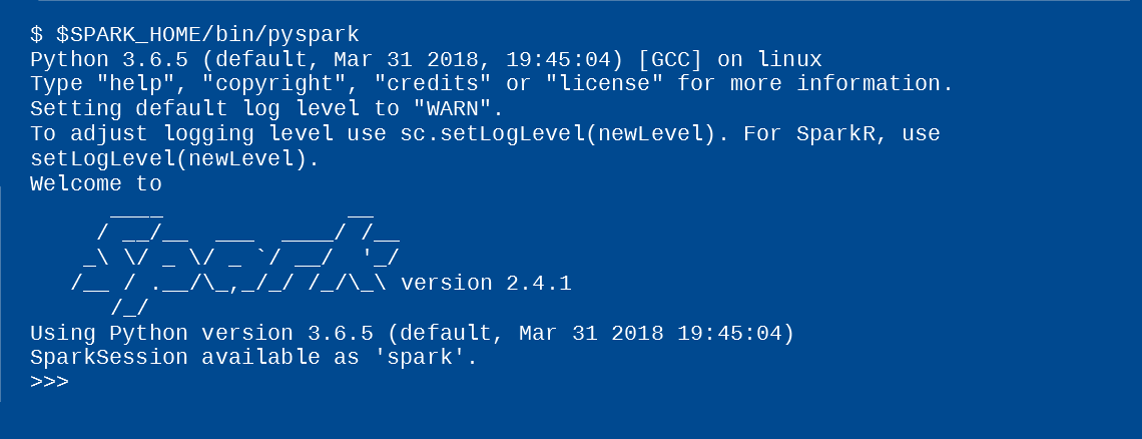

The third part of the course focuses on the frameworks Apache Spark and Intel BigDL, explaining how to install them and how to run experiments. Here we will practice with some examples of processing data with Spark, aggregating data with SparkSQL, modeling data with SparkML and performing Deep Learning with the Intel BigDL library for Spark. The parts marked as "Hands-On" show Spark and BigDL in action, and students can try them at home on their own computers or equipment. In the "code" section, you will find helpful scripts to install and configure the environment for the presented technologies, as well as for the hands-on sessions and other demos.

Copyright © Barcelona Supercomputing Center, 2019-2020 - All Rights Reserved - AI in DataCenters